Data augmentation is a very useful technique for avoiding overfitting. If you have any questions or suggestions, feel free to leave them in the comments.

#Keras data augmentation MNIST how to#

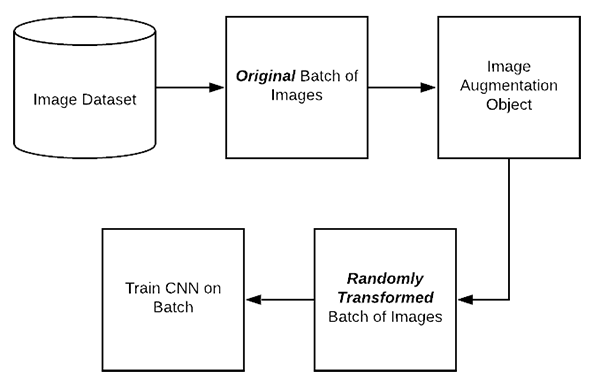

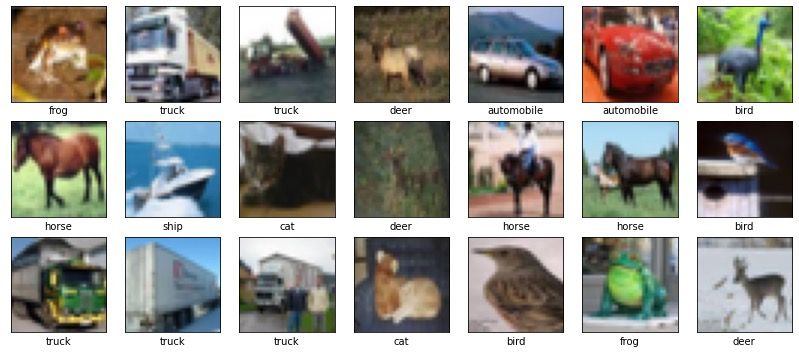

Why use Data Augmentation?

Deep learning algorithms are data-hungry: we need a lot of data to build a good deep learning model. One way to increase the amount of training data is web scraping, that is, collecting data such as images from the internet. However, this is not cost-effective when you are building software. So, to get more data, we use data augmentation, which creates an artificial but realistic dataset.

In this blog, we will perform data augmentation on images using the Keras `ImageDataGenerator` class. It generates batches of tensor image data with real-time data augmentation.

For `tf.data` input pipelines, see the "Better performance with the tf.data API" guide. When loading the MNIST dataset with TensorFlow Datasets, pass `shuffle_files=True`: the MNIST data is only stored in a single file, but for larger datasets with multiple files on disk, it's good practice to shuffle them when training.

How to do Data Augmentation in Python using the Keras TensorFlow API?

First, we import the Python libraries required for the task.

```python
import numpy as np
# Module path assumed; it is missing from the source.
from tensorflow.keras.preprocessing.image import ImageDataGenerator
```

Next, we read an image, either by writing its file name or by passing its complete path. Let us first see what the original image looks like by plotting it.

Finally, we perform the data augmentation. Many transformations are available, such as width shift, zoom, flip, and more. We will use some of them on the image.

```python
# data augmentation
augmentation = ImageDataGenerator(
    rotation_range=25,
    width_shift_range=0.2,
    # ... (remaining arguments truncated)
)

# applying the transformation on the image
transformed_image = augmentation.flow(aug_img)

# plotting the transformations applied to the image
```

From the output, we can observe the transformations that were applied.

Wrapping up: we have learned what data augmentation is, why it is used, and how to apply it.
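To make two of the transformations named above concrete, here is a minimal NumPy-only sketch of a horizontal flip and a width shift. It is not how `ImageDataGenerator` works internally: the helper names `horizontal_flip`, `width_shift`, and `random_augment` are hypothetical, and filling the vacated pixels with zeros is a simplifying assumption.

```python
import numpy as np


def horizontal_flip(image):
    """Mirror an (H, W) or (H, W, C) image left to right."""
    return image[:, ::-1]


def width_shift(image, fraction):
    """Shift an image horizontally by `fraction` of its width.

    Positive fractions shift right, negative ones shift left.
    Vacated pixels are filled with zeros (an assumption of this sketch).
    """
    width = image.shape[1]
    shift = int(round(fraction * width))
    shifted = np.zeros_like(image)
    if shift > 0:
        shifted[:, shift:] = image[:, :width - shift]
    elif shift < 0:
        shifted[:, :shift] = image[:, -shift:]
    else:
        shifted[...] = image
    return shifted


def random_augment(image, rng, max_shift=0.2):
    """Randomly flip the image, then shift it by up to `max_shift` of its width."""
    if rng.random() < 0.5:
        image = horizontal_flip(image)
    fraction = rng.uniform(-max_shift, max_shift)
    return width_shift(image, fraction)


# A tiny 3x4 "image" makes the effect easy to read.
demo = np.arange(12).reshape(3, 4)
flipped = horizontal_flip(demo)
shifted_right = width_shift(demo, 0.25)
augmented = random_augment(demo, np.random.default_rng(0))
```

Each call to `random_augment` yields a slightly different version of the same image, which is the sense in which augmentation increases the diversity of the training set.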
The added functionality does the following:

- Convert each image to `tf.float32` in the 0-1 range.
- `cache()` the results, as they can be reused after each repeat.
- Randomly flip each image left to right using `random_flip_left_right`.
- Randomly change the contrast of each image using `random_contrast`.
- Double the number of images with `repeat`, which repeats all the steps.

The corresponding transformation functions are:

```python
lambda image, label: (tf.image.convert_image_dtype(image, tf.float32), label)
lambda image, label: (tf.image.random_flip_left_right(image), label)
lambda image, label: (tf.image.random_contrast(image, lower=0.0, upper=1.0), label)
```

Similarly, you can use other functions such as `random_flip_up_down` and `random_crop`, which randomly flip an image vertically (upside down) and randomly crop a tensor to a given size, respectively.

Your `get_dataset` function will then look like this:

```python
def get_dataset(batch_size=200):
    # ... (rest of the function body is missing)
    lambda image, label: (tf.image.convert_image_dtype(image, tf.float32), label)
```

Adding the link suggested by H, which gives an end-to-end example of data augmentation that also uses the MNIST dataset.

How to do Data Augmentation using Keras in Python

What is Data Augmentation?

Data augmentation is a technique used to increase the diversity of the training set by applying various transformations; it also increases the amount of data in the training set. As a result, a new dataset is created that contains the data with the new transformations.

Note: Data augmentation is applied only to the training set, not to the validation or test set, because the training set is used to train the model, while the validation and test sets are used to evaluate it.
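The `tf.image` steps listed above (convert, cache, flip, contrast, repeat) can be sketched as one `tf.data` pipeline. This is a minimal sketch, not the answer's exact code: the function names (`to_float`, `flip`, `contrast`, `build_pipelines`) are hypothetical, and random arrays stand in for MNIST so that nothing has to be downloaded. Augmentation is applied to the training pipeline only.

```python
import numpy as np
import tensorflow as tf


def to_float(image, label):
    # uint8 pixels in [0, 255] become float32 values in [0, 1].
    return tf.image.convert_image_dtype(image, tf.float32), label


def flip(image, label):
    # Random left-to-right flip of each image.
    return tf.image.random_flip_left_right(image), label


def contrast(image, label):
    # Random contrast factor drawn from [0.0, 1.0).
    return tf.image.random_contrast(image, lower=0.0, upper=1.0), label


def build_pipelines(train_images, train_labels, test_images, test_labels,
                    batch_size=200, repeats=2):
    train = (
        tf.data.Dataset.from_tensor_slices((train_images, train_labels))
        .map(to_float)
        .cache()            # cache only the deterministic conversion
        .map(flip)          # random ops run again on every repeat
        .map(contrast)
        .shuffle(10000)
        .batch(batch_size)
        .repeat(repeats)    # each image is seen `repeats` times
    )
    # No augmentation for the test data, only the dtype conversion.
    test = (
        tf.data.Dataset.from_tensor_slices((test_images, test_labels))
        .map(to_float)
        .batch(batch_size)
    )
    return train, test


# Synthetic stand-in for MNIST: 10 grayscale 28x28 images with labels 0-9.
rng = np.random.default_rng(0)
images = rng.integers(0, 256, size=(10, 28, 28, 1), dtype=np.uint8)
labels = rng.integers(0, 10, size=(10,), dtype=np.int64)
train_ds, test_ds = build_pipelines(images, labels, images, labels, batch_size=4)
```

Placing `cache()` before the random operations matters: the converted images are stored once, while the flip and contrast changes are redrawn each time the data is repeated.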

I've tried the following and it didn't work:

```python
model.fit_generator(data_generator.flow(train_dataset, batch_size=32),
                    steps_per_epoch=len(train_dataset) / 32, epochs=20)
```

Not surprising, since `train_x` and `train_y` are usually fed as two arguments to the `flow` function, not "packed" into one `tf.data.Dataset`.

The `tf.image` module contains various functions for image processing, and you can add them to your `get_dataset` function.
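A side note on the `steps_per_epoch` argument in the call above: dividing with `/` produces a float, while Keras documents this argument as an integer. A small sketch of the usual arithmetic follows. The helper name `steps_per_epoch` is hypothetical, and 60,000 is the size of the MNIST training split.

```python
import math


def steps_per_epoch(num_examples, batch_size):
    """Number of batches needed to see every example once (last batch may be partial)."""
    return math.ceil(num_examples / batch_size)


steps_32 = steps_per_epoch(60000, 32)
steps_200 = steps_per_epoch(60000, 200)
print(steps_32, steps_200)
```

Note that once a dataset has been batched, its length already counts batches rather than individual examples, so no further division is needed in that case.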

```python
    # ... continuation of get_dataset
    mnist_train, mnist_test = datasets['train'], datasets['test']

    train_dataset = mnist_train.map(scale).shuffle(10000).batch(batch_size)
    test_dataset = mnist_test.map(scale).batch(batch_size)

    return train_dataset, test_dataset


train_dataset, test_dataset = get_dataset()
```

So, how can I use data augmentation here? As far as I know, I can't use the tf.keras `ImageDataGenerator`, right?

#Keras data augmentation MNIST code#

In order to use Google Colab's TPUs, I need a `tf.data.Dataset`. How can I then use data augmentation on such a dataset? More specifically, my code so far is:

```python
def get_dataset(batch_size=200):
    datasets, info = tfds.load(name='mnist', with_info=True, as_supervised=True,
                               # ... (remaining arguments truncated)
                               )
```